2014:Symbolic Melodic Similarity Results

Introduction

These are the results for the 2014 running of the Symbolic Melodic Similarity task set. For background information about this task set please refer to the 2014:Symbolic Melodic Similarity page.

Each system was given a query and returned the 10 most melodically similar songs from those taken from the Essen Collection (5274 pieces in the MIDI format; see ESAC Data Homepage for more information). For each query, we made four classes of error-mutations, so the set comprises the following query classes:

- 0. No errors

- 1. One note deleted

- 2. One note inserted

- 3. One interval enlarged

- 4. One interval compressed

For each query (and its 4 mutations), the returned results (candidates) from all systems were grouped together (query set) for evaluation by the human graders. The graders were provided with only the perfect version of the query against which to evaluate the candidates, and did not know whether the candidates came from a perfect or mutated query. Each query/candidate set was evaluated by 1 individual grader. Using the Evalutron 6000 system, the graders gave each query/candidate pair two types of scores: one categorical score with 3 categories (NS, SS, VS, as explained below) and one fine score (in the range from 0 to 100).
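The pooling step described above can be sketched as follows. This is only an illustration under stated assumptions: the `runs` structure, the candidate identifiers, and the `build_query_sets` helper are hypothetical, and the query ids follow the `q01`, `q01_3` pattern used in the per-query table on this page.

```python
from collections import defaultdict

def build_query_sets(runs):
    """Pool candidates per base query across all systems and all mutations.

    runs: {system_name: {query_id: [candidate_id, ...]}} (hypothetical layout).
    A query id such as 'q01_3' is assumed to be mutation 3 of base query 'q01'.
    """
    pools = defaultdict(set)
    for results in runs.values():
        for query_id, candidates in results.items():
            base_query = query_id.split("_")[0]
            pools[base_query].update(candidates)
    # Sorted lists make the pooled sets deterministic to display.
    return {base: sorted(cands) for base, cands in pools.items()}

# Made-up candidate ids, for illustration only.
example_runs = {
    "JU1": {"q01": ["a", "b"], "q01_1": ["b", "c"]},
    "YO1": {"q01": ["c", "d"], "q02": ["e"]},
}
example_sets = build_query_sets(example_runs)
```

Because duplicates collapse inside each pool, a grader sees every candidate once per query set, regardless of how many systems or mutated queries returned it.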

Evalutron 6000 Summary Data

Number of evaluators = 6

Number of evaluations per query/candidate pair = 1

Number of queries per grader = 1

Total number of unique query/candidate pairs graded = 436

Average number of query/candidate pairs evaluated per grader: 73

Number of queries = 6 (perfect) with each perfect query error-mutated 4 different ways = 30
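As a quick check of the arithmetic in this summary, using only the figures listed above (the variable names are illustrative):

```python
perfect_queries = 6
mutations_per_query = 4

# Each perfect query is also run in 4 mutated forms.
total_queries = perfect_queries * (1 + mutations_per_query)

graded_pairs = 436
graders = 6
average_pairs_per_grader = graded_pairs / graders  # about 72.67

print(total_queries, round(average_pairs_per_grader))
```

The rounded average agrees with the reported 73 pairs per grader.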

General Legend

| Sub code | Submission name | Abstract | Contributors |

|---|---|---|---|

| JU1 | ShapeH | | Julián Urbano |

| JU2 | ShapeTime | | Julián Urbano |

| JU3 | Time | | Julián Urbano |

| YO1 | YOkuboSMS | | Yoshiaki OKUBO |

Broad Categories

NS = Not Similar

SS = Somewhat Similar

VS = Very Similar

Table Headings

ADR = Average Dynamic Recall

NRGB = Normalized Recall at Group Boundaries

AP = Average Precision (non-interpolated)

PND = Precision at N Documents
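For readers unfamiliar with the last two headings, here is a minimal sketch of the textbook definitions of non-interpolated average precision and precision at N. It is not the evaluation code behind these results: how relevance was binarized and which value of N was used are not stated on this page, so both are left to the caller.

```python
def average_precision(relevant_flags, total_relevant=None):
    """Non-interpolated AP for a ranked list of booleans (True = relevant).

    Precision is taken at the rank of each relevant item and averaged.
    If total_relevant is not given, the number of relevant items found
    in the list is used as the denominator (an assumption of this sketch).
    """
    hits = 0
    precision_sum = 0.0
    for rank, is_relevant in enumerate(relevant_flags, start=1):
        if is_relevant:
            hits += 1
            precision_sum += hits / rank
    denominator = hits if total_relevant is None else total_relevant
    return precision_sum / denominator if denominator else 0.0

def precision_at_n(relevant_flags, n):
    """Fraction of the first n ranked items that are relevant."""
    return sum(1 for flag in relevant_flags[:n] if flag) / n

# Hypothetical ranked list: relevant items at ranks 1, 3 and 4.
example_flags = [True, False, True, True, False]
example_ap = average_precision(example_flags)   # (1/1 + 2/3 + 3/4) / 3
example_p3 = precision_at_n(example_flags, 3)   # 2 of the top 3 are relevant
```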

Calculating Summary Measures

Fine(1) = Sum of fine-grained human similarity decisions (0-100).

PSum(1) = Sum of human broad similarity decisions: NS=0, SS=1, VS=2.

WCSum(1) = 'World Cup' scoring: NS=0, SS=1, VS=3 (rewards Very Similar).

SDSum(1) = 'Stephen Downie' scoring: NS=0, SS=1, VS=4 (strongly rewards Very Similar).

Greater0(1) = NS=0, SS=1, VS=1 (binary relevance judgment).

Greater1(1) = NS=0, SS=0, VS=1 (binary relevance judgment using only Very Similar).

(1) Normalized to the range 0 to 1.
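The category weights listed above can be applied as in the following sketch. The weights are taken from this page; averaging over the candidates in a list is an assumption of the sketch (it is consistent with the value ranges in the result tables), and `summary_scores` is an illustrative name rather than part of any MIREX tool.

```python
# Weight of each broad category under each summary measure, as listed above.
WEIGHTS = {
    "PSum":     {"NS": 0, "SS": 1, "VS": 2},
    "WCSum":    {"NS": 0, "SS": 1, "VS": 3},
    "SDSum":    {"NS": 0, "SS": 1, "VS": 4},
    "Greater0": {"NS": 0, "SS": 1, "VS": 1},
    "Greater1": {"NS": 0, "SS": 0, "VS": 1},
}

def summary_scores(broad, fine):
    """Average every measure over one candidate list.

    broad: list of 'NS' / 'SS' / 'VS' judgments, one per candidate.
    fine:  list of fine scores (0-100), aligned with broad.
    Assumption: scores are averaged per candidate, not summed.
    """
    n = len(broad)
    scores = {
        name: sum(weights[label] for label in broad) / n
        for name, weights in WEIGHTS.items()
    }
    scores["Fine"] = sum(fine) / n
    return scores

# Made-up judgments for four candidates.
example = summary_scores(["VS", "SS", "NS", "SS"], [90, 60, 10, 50])
```

For these four made-up judgments, the VS candidate contributes 2, 3, and 4 points under PSum, WCSum, and SDSum respectively, which is the only place the three weighted measures differ.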

Summary Results

Overall Scores (Includes Perfect and Error Candidates)

| SCORE | JU1 | JU2 | JU3 | YO1 |

|---|---|---|---|---|

| ADR | 0.7089 | 0.7962 | 0.7997 | 0.6912 |

| NRGB | 0.6786 | 0.7493 | 0.7602 | 0.6378 |

| AP | 0.7344 | 0.7534 | 0.7992 | 0.5535 |

| PND | 0.7361 | 0.7444 | 0.7611 | 0.5611 |

| Fine | 53.7767 | 54.5967 | 51.1933 | 36.9633 |

| PSum | 1.1167 | 1.13 | 1.1267 | 0.69 |

| WCSum | 1.5033 | 1.5133 | 1.5433 | 0.93667 |

| SDSum | 1.89 | 1.8967 | 1.96 | 1.1833 |

| Greater0 | 0.73 | 0.74667 | 0.71 | 0.44333 |

| Greater1 | 0.38667 | 0.38333 | 0.41667 | 0.24667 |

Scores by Query Error Types

No Errors

| SCORE | JU1 | JU2 | JU3 | YO1 |

|---|---|---|---|---|

| ADR | 0.7089 | 0.7962 | 0.7997 | 0.6912 |

| NRGB | 0.6786 | 0.7493 | 0.7602 | 0.6378 |

| AP | 0.7344 | 0.7534 | 0.7992 | 0.5535 |

| PND | 0.7361 | 0.7444 | 0.7611 | 0.5611 |

| Fine | 58.2333 | 59.0667 | 53.3 | 38.3 |

| PSum | 1.2167 | 1.2333 | 1.1833 | 0.71667 |

| WCSum | 1.6167 | 1.6333 | 1.6167 | 0.96667 |

| SDSum | 2.0167 | 2.0333 | 2.05 | 1.2167 |

| Greater0 | 0.81667 | 0.83333 | 0.75 | 0.46667 |

| Greater1 | 0.4 | 0.4 | 0.43333 | 0.25 |

Note Deletions

| SCORE | JU1 | JU2 | JU3 | YO1 |

|---|---|---|---|---|

| ADR | 0.6419 | 0.8038 | 0.7728 | 0.5673 |

| NRGB | 0.6268 | 0.7623 | 0.7349 | 0.5436 |

| AP | 0.6510 | 0.7414 | 0.7364 | 0.4675 |

| PND | 0.6125 | 0.7139 | 0.6889 | 0.4694 |

| Fine | 57.1 | 58.2667 | 53.65 | 34.6167 |

| PSum | 1.2167 | 1.2333 | 1.1833 | 0.63333 |

| WCSum | 1.65 | 1.6667 | 1.6333 | 0.86667 |

| SDSum | 2.0833 | 2.1 | 2.0833 | 1.1 |

| Greater0 | 0.78333 | 0.8 | 0.73333 | 0.4 |

| Greater1 | 0.43333 | 0.43333 | 0.45 | 0.23333 |

Note Insertions

| SCORE | JU1 | JU2 | JU3 | YO1 |

|---|---|---|---|---|

| ADR | 0.6550 | 0.7943 | 0.8162 | 0.5889 |

| NRGB | 0.6326 | 0.7343 | 0.8015 | 0.5854 |

| AP | 0.6940 | 0.7282 | 0.7750 | 0.5167 |

| PND | 0.7361 | 0.6611 | 0.7361 | 0.5444 |

| Fine | 55.85 | 57.4667 | 53.05 | 37.35 |

| PSum | 1.15 | 1.1833 | 1.15 | 0.68333 |

| WCSum | 1.5333 | 1.5667 | 1.5833 | 0.93333 |

| SDSum | 1.9167 | 1.95 | 2.0167 | 1.1833 |

| Greater0 | 0.76667 | 0.8 | 0.71667 | 0.43333 |

| Greater1 | 0.38333 | 0.38333 | 0.43333 | 0.25 |

Enlarged Intervals

| SCORE | JU1 | JU2 | JU3 | YO1 |

|---|---|---|---|---|

| ADR | 0.6807 | 0.7895 | 0.7727 | 0.6957 |

| NRGB | 0.6378 | 0.7304 | 0.7135 | 0.6360 |

| AP | 0.6694 | 0.7299 | 0.7296 | 0.5757 |

| PND | 0.6278 | 0.7389 | 0.7000 | 0.5917 |

| Fine | 45.4667 | 45.4167 | 47.0167 | 33.9833 |

| PSum | 0.91667 | 0.91667 | 1.0167 | 0.61667 |

| WCSum | 1.25 | 1.25 | 1.3667 | 0.83333 |

| SDSum | 1.5833 | 1.5833 | 1.7167 | 1.05 |

| Greater0 | 0.58333 | 0.58333 | 0.66667 | 0.4 |

| Greater1 | 0.33333 | 0.33333 | 0.35 | 0.21667 |

Compressed Intervals

| SCORE | JU1 | JU2 | JU3 | YO1 |

|---|---|---|---|---|

| ADR | 0.6125 | 0.7076 | 0.7076 | 0.5701 |

| NRGB | 0.5579 | 0.6655 | 0.6655 | 0.5486 |

| AP | 0.6407 | 0.6711 | 0.6247 | 0.4728 |

| PND | 0.5833 | 0.6250 | 0.6250 | 0.4306 |

| Fine | 52.2333 | 52.7667 | 48.95 | 40.5667 |

| PSum | 1.0833 | 1.0833 | 1.1 | 0.8 |

| WCSum | 1.4667 | 1.45 | 1.5167 | 1.0833 |

| SDSum | 1.85 | 1.8167 | 1.9333 | 1.3667 |

| Greater0 | 0.7 | 0.71667 | 0.68333 | 0.51667 |

| Greater1 | 0.38333 | 0.36667 | 0.41667 | 0.28333 |
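The Overall Fine row appears to be the unweighted mean of the five per-class Fine rows (No Errors, Note Deletions, Note Insertions, Enlarged Intervals, Compressed Intervals). The short check below recomputes it from values copied from the tables above; the variable names are illustrative.

```python
# Fine scores per query class, in the order the tables appear above.
FINE_BY_CLASS = {
    "JU1": [58.2333, 57.1, 55.85, 45.4667, 52.2333],
    "JU2": [59.0667, 58.2667, 57.4667, 45.4167, 52.7667],
    "JU3": [53.3, 53.65, 53.05, 47.0167, 48.95],
    "YO1": [38.3, 34.6167, 37.35, 33.9833, 40.5667],
}

# Fine row of the Overall Scores table.
OVERALL_FINE = {"JU1": 53.7767, "JU2": 54.5967, "JU3": 51.1933, "YO1": 36.9633}

recomputed = {
    system: sum(values) / len(values)
    for system, values in FINE_BY_CLASS.items()
}

for system, value in recomputed.items():
    print(system, round(value, 4), OVERALL_FINE[system])
```

The recomputed means agree with the Overall table to within rounding of the published values.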

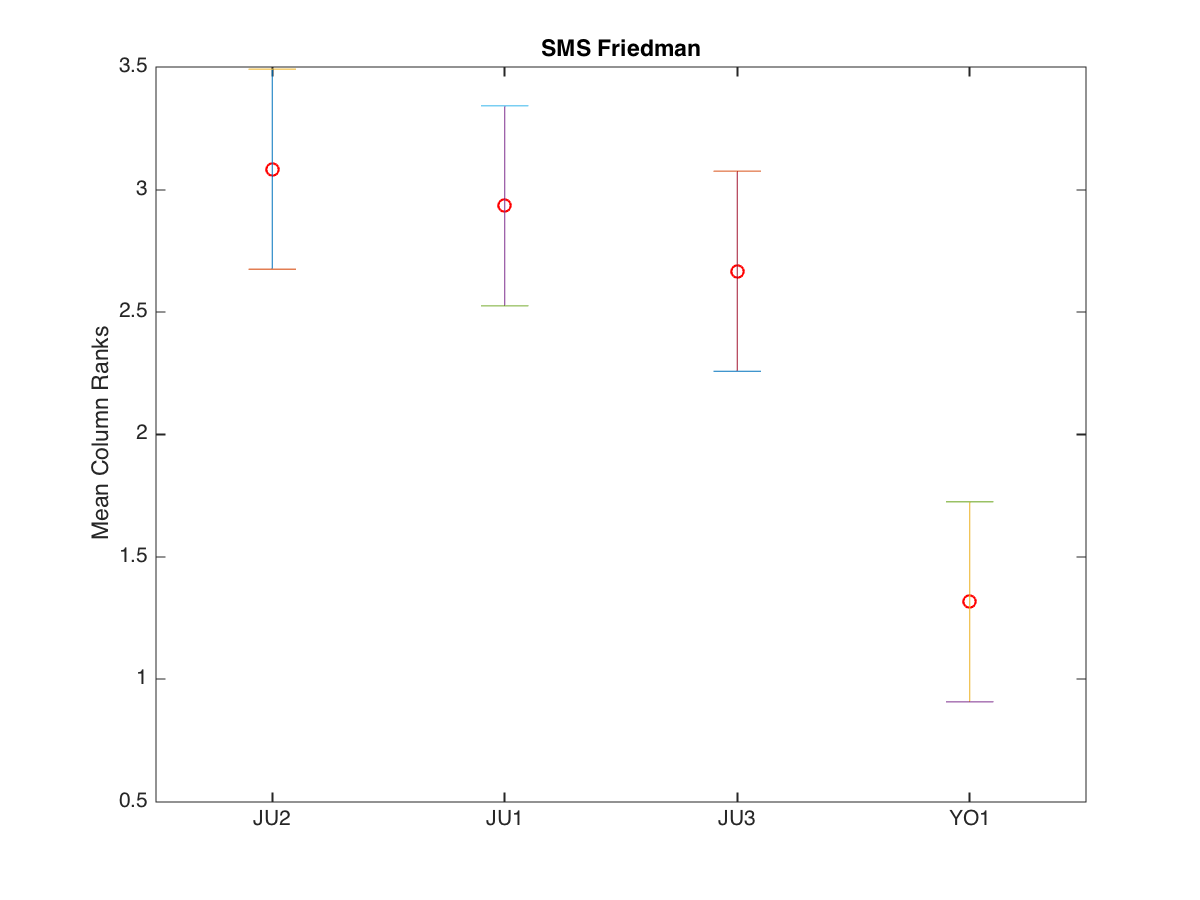

Friedman Test with Multiple Comparisons Results (p=0.05)

The Friedman test was run in MATLAB against the Fine summary data over the 30 queries.

Command: [c,m,h,gnames] = multcompare(stats, 'ctype', 'tukey-kramer','estimate', 'friedman', 'alpha', 0.05);

| Row Labels | JU1 | JU2 | JU3 | YO1 |

|---|---|---|---|---|

| q01 | 63.6 | 63.6 | 53.7 | 37.3 |

| q01_1 | 63.6 | 63.6 | 53.7 | 27.2 |

| q01_2 | 47.6 | 49.5 | 47.1 | 29.1 |

| q01_3 | 25.2 | 24.9 | 32.8 | 30.5 |

| q01_4 | 37.7 | 38.4 | 23.2 | 29.1 |

| q02 | 72.5 | 72.5 | 72 | 56.5 |

| q02_1 | 73.5 | 72.5 | 74 | 56 |

| q02_2 | 70.5 | 70.5 | 70.5 | 60.5 |

| q02_3 | 70 | 70 | 68.5 | 56.5 |

| q02_4 | 72.5 | 70 | 72.5 | 56 |

| q03 | 49 | 49 | 31.5 | 31.8 |

| q03_1 | 45 | 45 | 31.8 | 31.8 |

| q03_2 | 49 | 49 | 38.5 | 35.8 |

| q03_3 | 49 | 49 | 34.5 | 31.8 |

| q03_4 | 49 | 49 | 31.5 | 32 |

| q04 | 67.7 | 67.7 | 64.8 | 29.2 |

| q04_1 | 62.9 | 62.9 | 69.1 | 29.2 |

| q04_2 | 70.9 | 73.7 | 68.9 | 33.2 |

| q04_3 | 64.5 | 64.5 | 60 | 21.6 |

| q04_4 | 62.6 | 62.6 | 69.7 | 47.3 |

| q05 | 63 | 68 | 63 | 43.5 |

| q05_1 | 64 | 72 | 58.5 | 32 |

| q05_2 | 63 | 68 | 58.5 | 34 |

| q05_3 | 30.5 | 30.5 | 50 | 34 |

| q05_4 | 63 | 68 | 63 | 47.5 |

| q06 | 33.6 | 33.6 | 34.8 | 31.5 |

| q06_1 | 33.6 | 33.6 | 34.8 | 31.5 |

| q06_2 | 34.1 | 34.1 | 34.8 | 31.5 |

| q06_3 | 33.6 | 33.6 | 36.3 | 29.5 |

| q06_4 | 28.6 | 28.6 | 33.8 | 31.5 |

| TeamID | TeamID | Lowerbound | Mean | Upperbound | Significance |

|---|---|---|---|---|---|

| JU2 | JU1 | -0.6669 | 0.1500 | 0.9669 | FALSE |

| JU2 | JU3 | -0.4002 | 0.4167 | 1.2336 | FALSE |

| JU2 | YO1 | 0.9498 | 1.7667 | 2.5836 | TRUE |

| JU1 | JU3 | -0.5502 | 0.2667 | 1.0836 | FALSE |

| JU1 | YO1 | 0.7998 | 1.6167 | 2.4336 | TRUE |

| JU3 | YO1 | 0.5331 | 1.3500 | 2.1669 | TRUE |
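The Mean column above can be read as a difference in mean Friedman ranks between the two systems. The sketch below illustrates this in plain Python rather than MATLAB: each query's four Fine scores are ranked (ties share the average rank), ranks are averaged per system over the 30 queries, and the means are subtracted. The data are copied from the per-query table above; the function and variable names are illustrative, and the confidence bounds are not reproduced.

```python
SYSTEMS = ("JU1", "JU2", "JU3", "YO1")

# Fine scores per query (q01, q01_1, ..., q06_4), columns ordered as SYSTEMS.
FINE = [
    (63.6, 63.6, 53.7, 37.3), (63.6, 63.6, 53.7, 27.2), (47.6, 49.5, 47.1, 29.1),
    (25.2, 24.9, 32.8, 30.5), (37.7, 38.4, 23.2, 29.1),
    (72.5, 72.5, 72, 56.5), (73.5, 72.5, 74, 56), (70.5, 70.5, 70.5, 60.5),
    (70, 70, 68.5, 56.5), (72.5, 70, 72.5, 56),
    (49, 49, 31.5, 31.8), (45, 45, 31.8, 31.8), (49, 49, 38.5, 35.8),
    (49, 49, 34.5, 31.8), (49, 49, 31.5, 32),
    (67.7, 67.7, 64.8, 29.2), (62.9, 62.9, 69.1, 29.2), (70.9, 73.7, 68.9, 33.2),
    (64.5, 64.5, 60, 21.6), (62.6, 62.6, 69.7, 47.3),
    (63, 68, 63, 43.5), (64, 72, 58.5, 32), (63, 68, 58.5, 34),
    (30.5, 30.5, 50, 34), (63, 68, 63, 47.5),
    (33.6, 33.6, 34.8, 31.5), (33.6, 33.6, 34.8, 31.5), (34.1, 34.1, 34.8, 31.5),
    (33.6, 33.6, 36.3, 29.5), (28.6, 28.6, 33.8, 31.5),
]

def average_ranks(row):
    """Rank values ascending (1 = lowest); tied values share their mean rank."""
    order = sorted(range(len(row)), key=lambda i: row[i])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

ranked_rows = [average_ranks(row) for row in FINE]
mean_rank = {
    system: sum(column) / len(FINE)
    for system, column in zip(SYSTEMS, zip(*ranked_rows))
}

diff_ju2_ju1 = mean_rank["JU2"] - mean_rank["JU1"]
diff_ju2_ju3 = mean_rank["JU2"] - mean_rank["JU3"]
print(round(diff_ju2_ju1, 4), round(diff_ju2_ju3, 4))
```

Under these assumptions the two differences come out at 0.15 and about 0.4167, matching the JU2/JU1 and JU2/JU3 rows of the table above.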