2018:Multiple Fundamental Frequency Estimation & Tracking Results - MIREX Dataset

Introduction

These are the results for the 2018 running of the Multiple Fundamental Frequency Estimation and Tracking task on the MIREX dataset. For background information about this task, please refer to the 2018:Multiple Fundamental Frequency Estimation & Tracking page.

General Legend

| Sub code | Submission name | Abstract | Contributors |
|---|---|---|---|
| CB1 | Silvet | | Chris Cannam, Emmanouil Benetos |
| CB2 | Silvet Live | | Chris Cannam, Emmanouil Benetos |

Task 1: Multiple Fundamental Frequency Estimation (MF0E)

MF0E Overall Summary Results

Below are the average scores across 40 test files. These files come from 3 different sources: a woodwind quintet recording of bassoon, clarinet, horn, flute and oboe (UIUC); rendered MIDI using the RWC database donated by IRCAM; and a quartet recording of bassoon, clarinet, violin and sax donated by Dr. Bryan Pardo's Interactive Audio Lab (IAL). 20 files come from 5 sections of the woodwind recording, where each section has 4 files ranging from polyphony 2 to polyphony 5. 12 files come from IAL, drawn from 4 different songs ranging from polyphony 2 to polyphony 4, and 8 files come from RWC synthesized MIDI, drawn from 2 different songs ranging from polyphony 2 to polyphony 5.

Detailed Results

The detailed results table is unavailable: the file https://www.music-ir.org/mirex/results/2018/mf0/est_mirex/summary/task1.results.csv was not found.

Detailed Chroma Results

Here, accuracy is assessed on chroma results (i.e., all F0s are mapped to a single octave before evaluation).

| Sub code | Precision | Recall | Accuracy | Etot | Esubs | Emiss | Efa |
|---|---|---|---|---|---|---|---|
| CB1 | 0.851 | 0.551 | 0.527 | 0.497 | 0.062 | 0.389 | 0.047 |
| CB2 | 0.747 | 0.527 | 0.479 | 0.568 | 0.106 | 0.367 | 0.095 |
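The chroma evaluation described above maps every F0 to a single octave before comparison. The following is a minimal Python sketch of one way to do that. It rests on assumptions: a reference tuning of A4 = 440 Hz and rounding to the nearest equal-tempered semitone. The function names are hypothetical and this is not the MIREX evaluation code, whose exact folding procedure is not described on this page.

```python
import math

A4_HZ = 440.0  # assumed reference tuning; not stated in the source


def f0_to_midi(f0_hz: float) -> float:
    """Convert a frequency in Hz to a (fractional) MIDI note number."""
    return 69.0 + 12.0 * math.log2(f0_hz / A4_HZ)


def f0_to_chroma(f0_hz: float) -> int:
    """Fold an F0 to a pitch class in 0..11 (0 = C), discarding the octave."""
    return int(round(f0_to_midi(f0_hz))) % 12


def chroma_match(est_hz: float, ref_hz: float) -> bool:
    """True if two F0s share a pitch class, regardless of octave."""
    return f0_to_chroma(est_hz) == f0_to_chroma(ref_hz)
```

Under this sketch, an estimate one octave above or below the reference counts as a match, which is consistent with chroma scores being at least as high as the corresponding non-chroma scores.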

Individual Results Files for Task 1

CB1 = Chris Cannam, Emmanouil Benetos

CB2 = Chris Cannam, Emmanouil Benetos

Info about the filenames

Filenames starting with part* come from the acoustic woodwind recording; those starting with RWC are synthesized. The instrument abbreviations are:

bs = bassoon, cl = clarinet, fl = flute, hn = horn, ob = oboe, vl = violin, cel = cello, gtr = guitar, sax = saxophone, bass = electric bass guitar

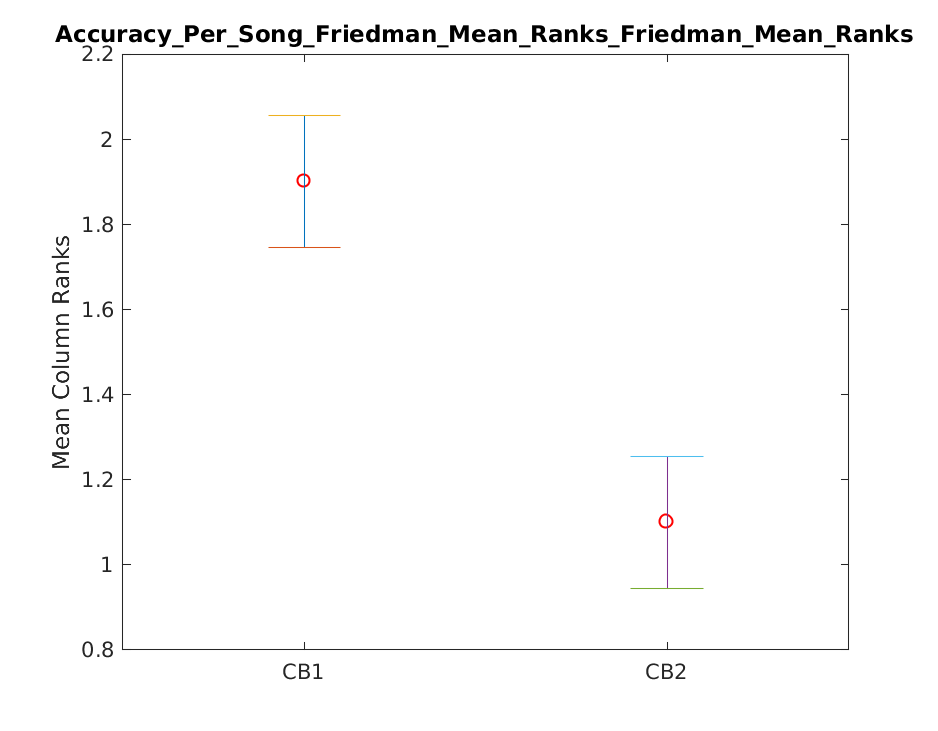

Friedman tests for Multiple Fundamental Frequency Estimation (MF0E)

The Friedman test was run in MATLAB to test for significant differences among systems with regard to performance (accuracy) on individual files.

Tukey-Kramer HSD Multi-Comparison

| TeamID | TeamID | Lowerbound | Mean | Upperbound | Significance |
|---|---|---|---|---|---|
| CB1 | CB2 | 0.4901 | 0.8000 | 1.1099 | TRUE |

Task 2: Note Tracking (NT)

NT Mixed Set Overall Summary Results

This subtask is evaluated in two different ways. In the first setup, a returned note is assumed correct if its onset is within ±50 ms of a reference note and its F0 is within ± a quarter tone of the corresponding reference note, ignoring the returned offset values. In the second setup, on top of the above requirements, a correct returned note is required to have an offset value within 20% of the reference note's duration around the reference note's offset, or within 50 ms, whichever is larger.
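The two matching criteria described above can be sketched in Python as follows. This is an illustrative sketch, not the MIREX evaluation code: the `Note` class and function names are hypothetical, a quarter tone is taken as 50 cents, and the sketch only tests a single returned/reference pair. It does not decide which returned note is paired with which reference note across a whole file.

```python
import math
from dataclasses import dataclass


@dataclass
class Note:
    onset: float   # seconds
    offset: float  # seconds
    f0: float      # Hz


ONSET_TOL_S = 0.05          # +/- 50 ms onset tolerance
QUARTER_TONE_CENTS = 50.0   # a quarter tone is half a semitone (50 cents)
MIN_OFFSET_TOL_S = 0.05     # floor on the offset tolerance (50 ms)
OFFSET_RATIO = 0.2          # 20% of the reference note's duration


def pitch_ok(est: Note, ref: Note) -> bool:
    """F0 within a quarter tone of the reference, measured in cents."""
    cents = abs(1200.0 * math.log2(est.f0 / ref.f0))
    return cents <= QUARTER_TONE_CENTS


def onset_ok(est: Note, ref: Note) -> bool:
    """Onset within +/- 50 ms of the reference onset."""
    return abs(est.onset - ref.onset) <= ONSET_TOL_S


def offset_ok(est: Note, ref: Note) -> bool:
    """Offset within max(20% of reference duration, 50 ms) of the reference offset."""
    tol = max(OFFSET_RATIO * (ref.offset - ref.onset), MIN_OFFSET_TOL_S)
    return abs(est.offset - ref.offset) <= tol


def is_correct(est: Note, ref: Note, use_offset: bool = False) -> bool:
    """First setup (use_offset=False): onset and pitch only.
    Second setup (use_offset=True): additionally require a matching offset."""
    if not (onset_ok(est, ref) and pitch_ok(est, ref)):
        return False
    return offset_ok(est, ref) if use_offset else True
```

For example, with a reference note `Note(1.0, 2.0, 440.0)`, a returned note `Note(1.03, 2.5, 445.0)` passes the first setup but fails the second, because its offset is 0.5 s late against a tolerance of 0.2 s.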

A total of 34 files were used in this subtask: 16 from the woodwind recording, 8 from the IAL quintet recording, and 6 piano recordings.

| | CB1 | CB2 |
|---|---|---|
| Ave. F-Measure Onset-Offset | 0.3047 | 0.2064 |
| Ave. F-Measure Onset Only | 0.5029 | 0.3742 |
| Ave. F-Measure Chroma | 0.3207 | 0.2365 |
| Ave. F-Measure Onset Only Chroma | 0.5343 | 0.4276 |

Detailed Results

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.312 | 0.304 | 0.305 | 0.865 |
| CB2 | 0.201 | 0.230 | 0.206 | 0.862 |

Detailed Chroma Results

Here, accuracy is assessed on chroma results (i.e., all F0s are mapped to a single octave before evaluation).

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.329 | 0.320 | 0.321 | 0.860 |
| CB2 | 0.229 | 0.265 | 0.237 | 0.858 |

Results Based on Onset Only

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.525 | 0.493 | 0.503 | 0.720 |
| CB2 | 0.375 | 0.403 | 0.374 | 0.677 |

Chroma Results Based on Onset Only

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.558 | 0.524 | 0.534 | 0.700 |
| CB2 | 0.426 | 0.465 | 0.428 | 0.652 |

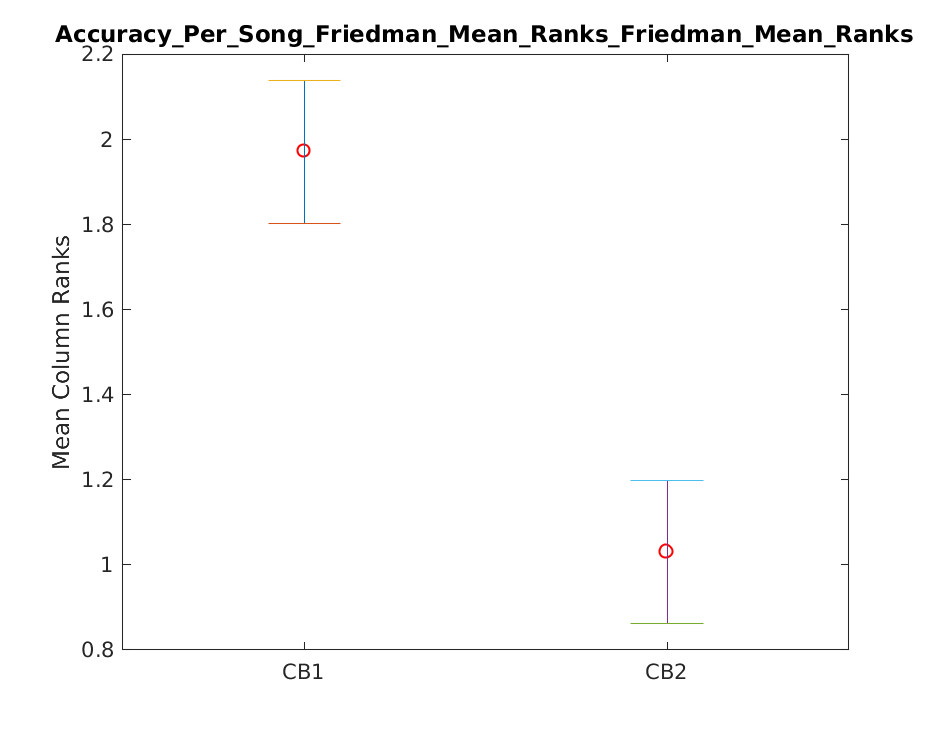

Friedman Tests for Note Tracking

The Friedman test was run in MATLAB to test for significant differences among systems with regard to the F-measure on individual files.

Tukey-Kramer HSD Multi-Comparison for Task 2

| TeamID | TeamID | Lowerbound | Mean | Upperbound | Significance |
|---|---|---|---|---|---|
| CB1 | CB2 | 0.6050 | 0.9412 | 1.2773 | TRUE |

NT Piano-Only Overall Summary Results

This subtask is evaluated in two different ways. In the first setup, a returned note is assumed correct if its onset is within ±50 ms of a reference note and its F0 is within ± a quarter tone of the corresponding reference note, ignoring the returned offset values. In the second setup, on top of the above requirements, a correct returned note is required to have an offset value within 20% of the reference note's duration around the reference note's offset, or within 50 ms, whichever is larger. The 6 piano recordings are evaluated separately for this subtask.

| | CB1 | CB2 | KB1 |
|---|---|---|---|
| Ave. F-Measure Onset-Offset | 0.2216 | 0.2085 | 0.5205 |
| Ave. F-Measure Onset Only | 0.7002 | 0.5642 | 0.6869 |
| Ave. F-Measure Chroma | 0.2343 | 0.2247 | 0.5245 |
| Ave. F-Measure Onset Only Chroma | 0.7103 | 0.5787 | 0.6875 |

Detailed Results

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.245 | 0.203 | 0.222 | 0.812 |
| CB2 | 0.228 | 0.195 | 0.209 | 0.797 |
| KB1 | 0.573 | 0.479 | 0.520 | 0.827 |

Detailed Chroma Results

Here, accuracy is assessed on chroma results (i.e., all F0s are mapped to a single octave before evaluation).

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.259 | 0.216 | 0.234 | 0.811 |
| CB2 | 0.245 | 0.210 | 0.225 | 0.792 |
| KB1 | 0.578 | 0.482 | 0.524 | 0.827 |

Results Based on Onset Only

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.746 | 0.666 | 0.700 | 0.572 |
| CB2 | 0.596 | 0.543 | 0.564 | 0.589 |
| KB1 | 0.753 | 0.636 | 0.687 | 0.745 |

Chroma Results Based on Onset Only

| Sub code | Precision | Recall | Ave. F-measure | Ave. Overlap |
|---|---|---|---|---|
| CB1 | 0.757 | 0.676 | 0.710 | 0.569 |
| CB2 | 0.611 | 0.557 | 0.579 | 0.586 |
| KB1 | 0.753 | 0.636 | 0.688 | 0.741 |

Individual Results Files for Task 2

CB1 = Chris Cannam, Emmanouil Benetos

CB2 = Chris Cannam, Emmanouil Benetos