2019:Patterns for Prediction Results

Introduction

In brief:

We look for

(1) Algorithms that take an excerpt of music as input (the prime), and output a predicted continuation of the excerpt.

(2) Additionally or alternatively, algorithms that take a prime and one or more continuations as input, and output the likelihood that each continuation is the genuine extension of the prime.

In more detail: One facet of human nature comprises the tendency to form predictions about what will happen in the future (Huron, 2006). Music, consisting of complex temporally extended sequences, provides an excellent setting for the study of prediction, and this topic has received attention from fields including but not limited to psychology (Collins, Tillmann, et al., 2014; Janssen, Burgoyne and Honing, 2017; Schellenberg, 1997; Schmukler, 1989), neuroscience (Koelsch et al., 2005), music theory (Gjerdingen, 2007; Lerdahl & Jackendoff, 1983; Rohrmeier & Pearce, 2018), music informatics (Conklin & Witten, 1995; Cherla et al., 2013), and machine learning (Elmsley, Weyde, & Armstrong, 2017; Hadjeres, Pachet, & Nielsen, 2016; Gjerdingen, 1989; Roberts et al., 2018; Sturm et al., 2016). In particular, we are interested in the way exact and inexact repetition occurs over the short, medium, and long term in pieces of music (Margulis, 2014; Widmer, 2016), and how these repetitions may interact with "schematic, veridical, dynamic, and conscious" expectations (Huron, 2006) in order to form a basis for successful prediction.

We call for algorithms that may model such expectations so as to predict the next musical events based on given, foregoing events (the prime). We invite contributions from all fields mentioned above (not just pattern discovery researchers), as different approaches may be complementary in terms of predicting correct continuations of a musical excerpt. We would like to explore these various approaches to music prediction in a MIREX task. For subtask (1) above (see "In brief"), the development and test datasets will contain an excerpt of a piece up until a cut-off point, after which the algorithm is supposed to generate the next N musical events up until 10 quarter-note beats, and we will quantitatively evaluate the extent to which an algorithm's continuation corresponds to the genuine continuation of the piece. For subtask (2), in addition to containing a prime, the development and test datasets will also contain continuations of the prime, one of which will be genuine, and the algorithm should rate the likelihood that each continuation is the genuine extension of the prime, which again will be evaluated quantitatively.

What is the relationship between pattern discovery and prediction? The last five years have seen an increasing interest in algorithms that discover or generate patterned data, leveraging methods beyond typical (e.g., Markovian) limits (Collins & Laney, 2017; MIREX Discovery of Repeated Themes & Sections task; Janssen, van Kranenburg and Volk, 2017; Ren et al., 2017; Widmer, 2016). One of the observations to emerge from the above-mentioned MIREX pattern discovery task is that an algorithm that is "good" at discovering patterns ought to be extendable to make "good" predictions for what will happen next in a given music excerpt (Meredith, 2013). Furthermore, evaluating the ability to predict may provide a stronger (or at least complementary) evaluation of an algorithm's pattern discovery capabilities, compared to evaluating its output against expert-annotated patterns, where the notion of "ground truth" has been debated (Meredith, 2013).

Contribution

...

For a more detailed introduction to the task, please see 2019:Patterns for Prediction.

Datasets and Algorithms

The Patterns for Prediction Development Dataset (PPDD-Jul2018) has been prepared by processing a randomly selected subset of the Lakh MIDI Dataset (LMD, Raffel, 2016). It has audio and symbolic versions crossed with monophonic and polyphonic versions. The audio is generated from the symbolic representation, so it is not "expressive". The symbolic data is presented in CSV format. For example,

20,64,62,0.5,0

20.66667,65,63,0.25,0

21,67,64,0.5,0

...

would be the start of a prime where the first event had ontime 20 (measured in quarter-note beats, equivalent to bar 6 beat 1 if the time signature were 4/4), MIDI note number (MNN) 64, estimated morphetic pitch number 62 (see p. 352 of Collins, 2011 for a diagrammatic explanation; for more details, see Meredith, 1999), duration 0.5 in quarter-note beats, and channel 0. Re-exports to MIDI are also provided, mainly for listening purposes. We also provide a descriptor file containing the original Lakh MIDI Dataset id, the BPM, time signature, and a key estimate. The audio dataset contains all these files, plus WAV files. Therefore, the audio and symbolic variants are identical to one another, apart from the presence of WAV files. All other variants are non-identical, although there may be some overlap, as they were all chosen from LMD originally.
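As an illustration of the CSV layout described above, the following minimal sketch parses rows into note records and converts an ontime to a bar and beat. The names `Note`, `parse_notes`, and `bar_and_beat` are hypothetical and not part of the dataset tooling. The sketch assumes the column order given in the text, integer-valued MNN and morphetic pitch fields, and a piece that starts at ontime 0 with no anacrusis.

```python
import csv
from dataclasses import dataclass
from io import StringIO


@dataclass(frozen=True)
class Note:
    ontime: float    # onset in quarter-note beats
    mnn: int         # MIDI note number
    mpn: int         # estimated morphetic pitch number
    duration: float  # duration in quarter-note beats
    channel: int


def parse_notes(text):
    """Parse CSV text with rows of ontime, MNN, MPN, duration, channel."""
    notes = []
    for row in csv.reader(StringIO(text)):
        if not row:
            continue  # skip blank lines
        ontime, mnn, mpn, duration, channel = row
        notes.append(Note(float(ontime), int(float(mnn)), int(float(mpn)),
                          float(duration), int(float(channel))))
    return notes


def bar_and_beat(ontime, beats_per_bar=4):
    """Convert an ontime to a 1-indexed (bar, beat), assuming the piece starts at ontime 0."""
    bar, beat = divmod(ontime, beats_per_bar)
    return int(bar) + 1, beat + 1


example = "20,64,62,0.5,0\n20.66667,65,63,0.25,0\n21,67,64,0.5,0\n"
notes = parse_notes(example)
first_position = bar_and_beat(notes[0].ontime)  # bar 6, beat 1 in 4/4
```

With the three example rows, the first event lands on bar 6 beat 1 under a 4/4 assumption, matching the description in the text.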

The provenance of the Patterns for Prediction Test Dataset (PPTD) will not be disclosed. For those concerned about overfitting: it is similar to LMD but is not drawn from LMD.

There are small (100 pieces), medium (1,000 pieces), and large (10,000 pieces) variants of each dataset, to cater to different approaches to the task (e.g., a point-set pattern discovery algorithm developer may not want/need as many training examples as a neural network researcher). Each prime lasts approximately 35 sec (according to the BPM value in the original MIDI file) and each continuation covers the subsequent 10 quarter-note beats. We would have liked to provide longer primes (as 35 sec affords investigation of medium- but not really long-term structure), but we have to strike a compromise between ideal and tractable scenarios.

Submissions to the symMono and symPoly variants of the tasks are listed in Table 1. There were no submissions to the audMono or audPoly variants of the tasks this year. The task captains prepared a first-order Markov model (MM) over a state space of measure beat and key-centralized MIDI note number. This enabled evaluation of the implicit subtask, and can also serve as a point of comparison for the explicit task. It should be noted, however, that this model had access to the full song/piece – not just the prime – so it is at an advantage compared to EN1 and FC1 in the explicit task.
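To make the baseline's state space concrete, here is a minimal sketch of a first-order Markov model over (beat of the measure, key-centralized MNN) states. It is not the task captains' implementation, whose details are not given here. The sketch assumes that "key-centralized" means subtracting the MNN of the estimated tonic, that notes arrive as (ontime, MNN) pairs, and that the time signature is known; the function names `to_states`, `train`, and `generate` are hypothetical.

```python
import random
from collections import Counter, defaultdict


def to_states(notes, tonic_mnn, beats_per_bar=4):
    """Map (ontime, mnn) pairs to (beat of measure, MNN relative to tonic) states."""
    return [(round(ontime % beats_per_bar, 5), mnn - tonic_mnn)
            for ontime, mnn in notes]


def train(state_sequences):
    """Count first-order transitions between consecutive states."""
    transitions = defaultdict(Counter)
    for states in state_sequences:
        for current, following in zip(states, states[1:]):
            transitions[current][following] += 1
    return transitions


def generate(transitions, start, n_states, seed=0):
    """Sample up to n_states successors of `start`, weighted by transition counts."""
    rng = random.Random(seed)
    state, out = start, []
    for _ in range(n_states):
        options = transitions.get(state)
        if not options:
            break  # unseen state: stop rather than guess
        candidates, weights = zip(*options.items())
        state = rng.choices(candidates, weights=weights)[0]
        out.append(state)
    return out


# Toy piece in C (tonic MNN 60), 4 beats per bar.
piece = [(0, 60), (1, 62), (2, 64), (3, 62), (4, 60), (5, 62)]
model = train([to_states(piece, tonic_mnn=60)])
continuation = generate(model, start=(1, 2), n_states=4)
```

Training on the full piece rather than only the prime, as the baseline did, simply means that the sequences passed to `train` include the material after the cut-off point.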

| Sub code | Submission name | Abstract | Contributors |
|---|---|---|---|
| Task Version | symMono - Task 1 | | |
| TD1 | CopyForward | | Timothy de Reuse |
| Task Version | symPoly - Task 1 | | |
| TD1 | CopyForward | | Timothy de Reuse |
| Task Version | symMono - Task 2 | | |
| EP1 | GenDetect | | Jeff Ens, Philippe Pasquier |
| Task Version | symPoly - Task 2 | | |
| EP1 | GenDetect | | Jeff Ens, Philippe Pasquier |
| YB2 | MLM | | Adrien Ycart, Emmanouil Benetos |
| YB5 | MLM | | Adrien Ycart, Emmanouil Benetos |

Table 1. Algorithms submitted to Patterns for Prediction 2019.

Results

We measure how well an algorithm can (1) predict a continuation, given a prime (explicit task), and (2) decide which of two candidate continuations is the true one and which is the foil, given a prime (implicit task). To evaluate performance on the explicit task, we compare the generated continuation with the true continuation and measure how many pitch-onset pairs are correctly predicted at various time intervals after the last note of the prime. To evaluate performance on the implicit task, we measure accuracy as the number of correct decisions divided by the total number of decisions. (For mathematical definitions of the various metrics, please see 2019:Patterns_for_Prediction#Evaluation_Procedure.)
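The two kinds of measurement can be sketched as follows. This is a simplified illustration that counts exact pitch-onset matches within a window after the start of the continuation; the official metric definitions are those on the task page and may differ (for example, in how matches are established). The function names and the inclusive window convention are assumptions made for this sketch.

```python
def prf_at(true_notes, generated_notes, start, cutoff):
    """Precision, recall and F1 over (onset, pitch) pairs whose onsets fall
    within `cutoff` quarter-note beats of `start` (inclusive)."""
    def window(notes):
        return {(round(onset - start, 5), pitch)
                for onset, pitch in notes
                if 0 <= onset - start <= cutoff}

    true_set, generated_set = window(true_notes), window(generated_notes)
    hits = len(true_set & generated_set)
    precision = hits / len(generated_set) if generated_set else 0.0
    recall = hits / len(true_set) if true_set else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def accuracy(decisions):
    """Implicit task: correct decisions divided by the total number of decisions."""
    return sum(decisions) / len(decisions)


true_continuation = [(20, 64), (21, 65), (22, 67), (23, 69)]
generated = [(20, 64), (21, 66), (22, 67)]
early = prf_at(true_continuation, generated, start=20, cutoff=2)
full = prf_at(true_continuation, generated, start=20, cutoff=10)
implicit = accuracy([True, True, False, True])
```

Evaluating the same continuation at several cut-offs, as in `early` and `full`, shows how scores can change with the time elapsed since the prediction start.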

For reference purposes, we also include results of the submissions from 2018 (BachProp and Seq2SeqP4P).

Figures

symMono

Explicit task: generate music given a prime

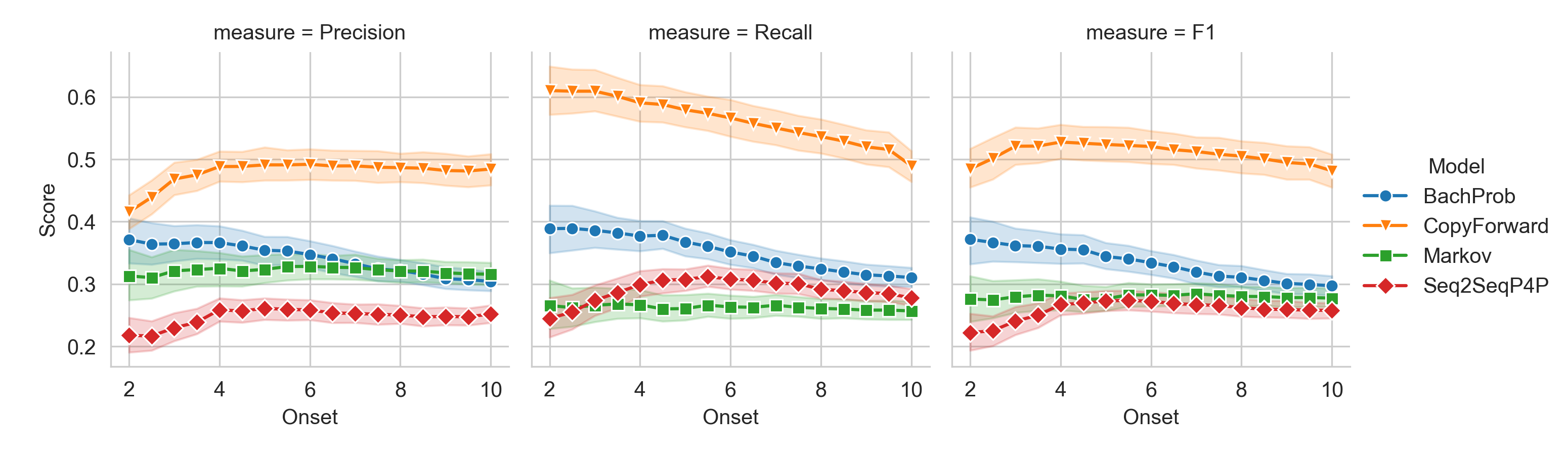

Figure 1. Precision, recall and F1 (cardinality score) in quarter note onsets from prediction start.

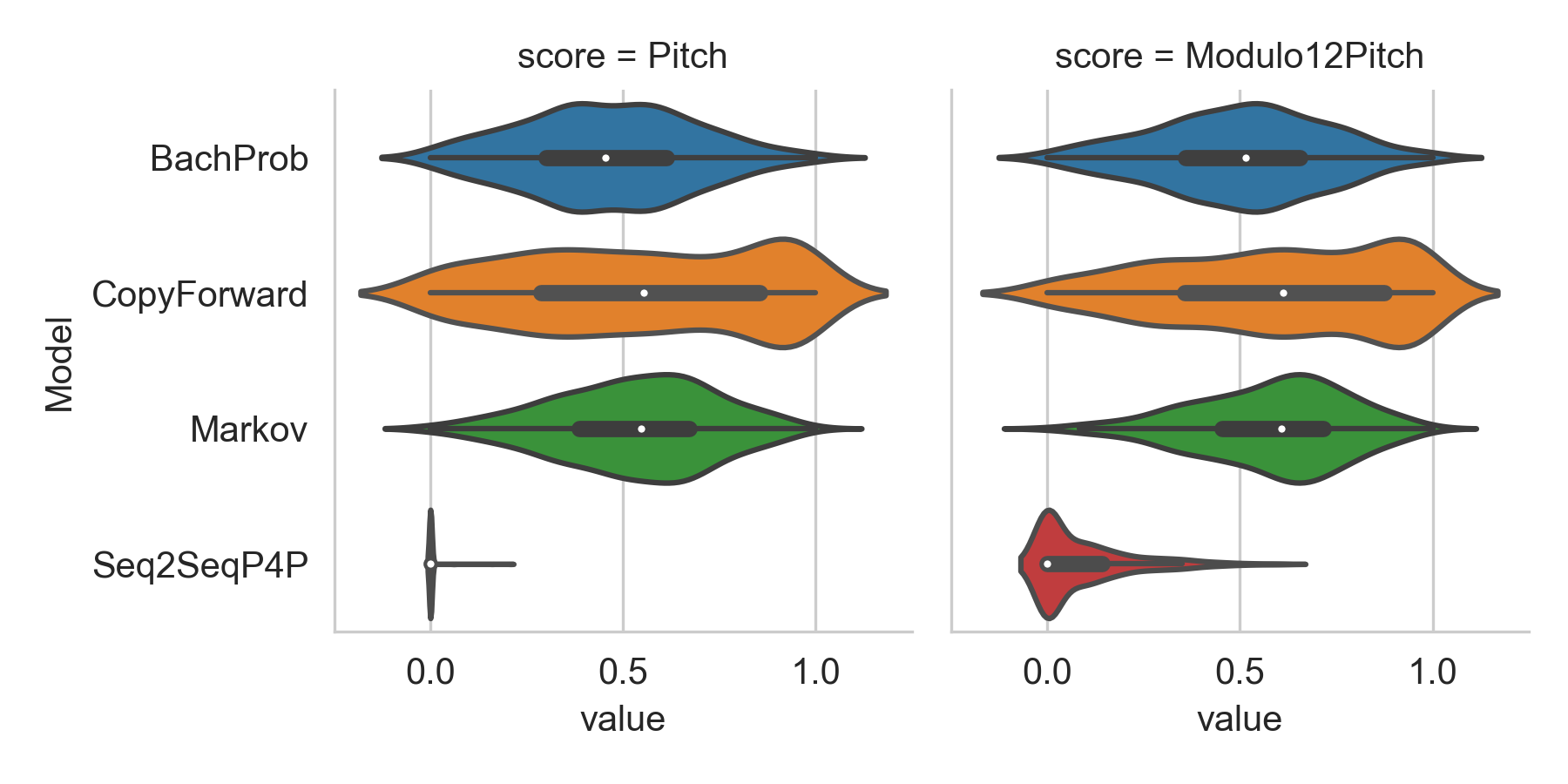

Figure 2. Pitch overlap of the algorithmically generated continuations with the true continuation.

symPoly

Explicit task: generate music given a prime

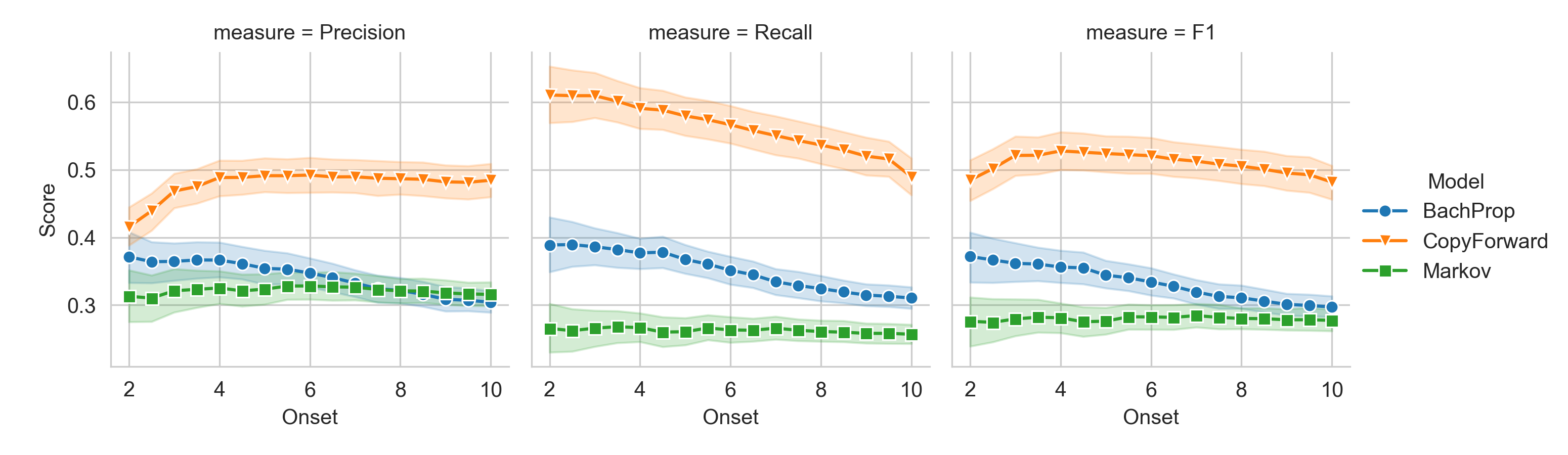

Figure 3. Precision, recall and F1 (cardinality score) in quarter note onsets from prediction start.

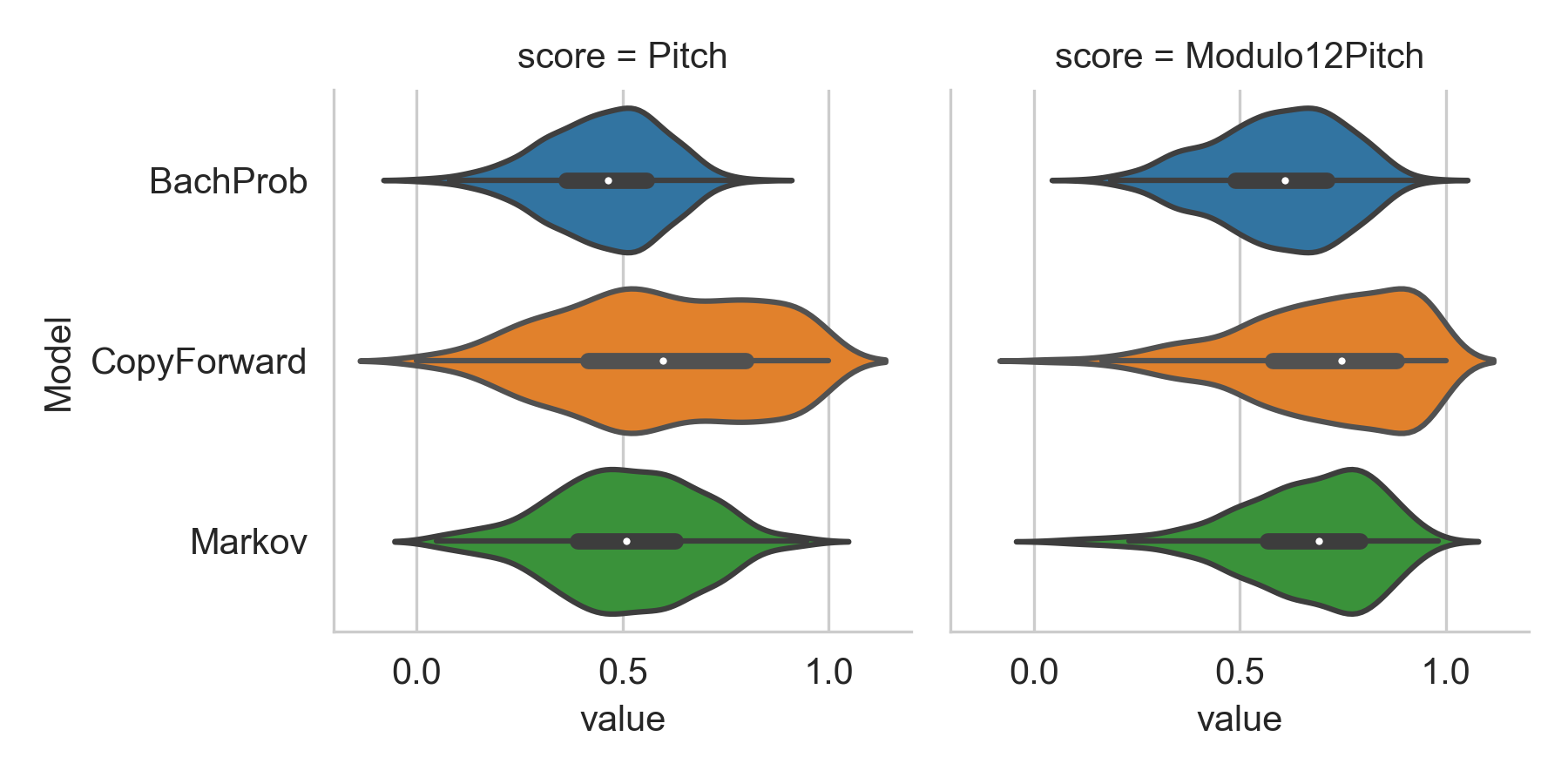

Figure 4. Pitch overlap of the algorithmically generated continuations with the true continuation.

Tables

symMono

Explicit task: generate music given a prime

| Model | Modulo12Pitch mean | Modulo12Pitch median | Modulo12Pitch std |
|---|---|---|---|
| BachProp | 0.502 | 0.516 | 0.219 |
| CopyForward | 0.596 | 0.612 | 0.292 |
| Markov | 0.583 | 0.608 | 0.195 |
| Seq2SeqP4P | 0.087 | 0.000 | 0.121 |

Table 2. Pitch overlap of the algorithmic continuations with the true continuation - mean, median and standard deviation.

symPoly

Explicit task: generate music given a prime

| Model | Pitch mean | Pitch median | Pitch std |
|---|---|---|---|
| BachProp | 0.455 | 0.466 | 0.139 |
| CopyForward | 0.594 | 0.598 | 0.238 |
| Markov | 0.506 | 0.508 | 0.176 |

Table 3. Pitch overlap of the algorithmic continuations with the true continuation - mean, median and standard deviation.

symMono/symPoly

Implicit task: discriminate true and foil continuation

| Model | Data | Observations | Accuracy | Mean Probability | Variance |
|---|---|---|---|---|---|
| GenDetect | mono | 500 | 1.000 | 1.000 | 0.000 |
| BachProp | mono | 499 | 0.844 | 0.498 | 0.006 |
| GenDetect | poly | 500 | 0.998 | 0.992 | 0.002 |
| BachProp | poly | 499 | 0.916 | 0.519 | 0.006 |
| MLM(2) | poly | 499 | 0.703 | 0.527 | 0.004 |
| MLM(5) | poly | 499 | 0.731 | 0.554 | 0.012 |

Table 4. Discrimination scores of the submitted algorithms.

Discussion

CopyForward (a method based on geometric pattern discovery) significantly outperforms the baseline Markov model and BachProp (a method based on recurrent neural networks) on the explicit subtask. The abstract for CopyForward suggests this relatively high level of performance is due to successful modeling of dynamic expectancies – that is, it uses information from the prime exclusively (no training data) to predict the content of the continuation. BachProp, on the other hand, attempts to model schematic (or corpus-based) expectancies too, combining information from the prime with information from a training process. It remains to be seen whether BachProp (or deep-learning approaches in general) could improve upon CopyForward's continuations when the latter's dependence on modeling of dynamic expectancies leads to unsuccessful predictions.

All algorithms submitted this year to the implicit subtask achieved significantly-above-chance performance. MLM employed an LSTM and GenDetect employed a gradient boosting classifier. GenDetect performed significantly better than previous and current submissions on this task, discriminating between all monophonic true and foil continuations correctly, and all but one polyphonic true and foil continuations. If we run this subtask again next year, we probably ought to identify a different, more sophisticated algorithm for generating the foils.
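One way to check an above-chance claim for a two-alternative decision is an exact one-sided binomial test against a chance level of 0.5. The sketch below is an illustration only: the statistical test used by the task captains is not stated here, and the count of 351 correct decisions out of 499 is an approximation obtained by rounding the lowest accuracy in Table 4 (0.703 for MLM(2)).

```python
from math import comb


def p_at_least(successes, trials, chance=0.5):
    """Probability of at least `successes` correct decisions in `trials`
    independent decisions when each is correct with probability `chance`."""
    return sum(comb(trials, k) * chance**k * (1 - chance)**(trials - k)
               for k in range(successes, trials + 1))


# Approximate count for the lowest accuracy in Table 4 (0.703 of 499 decisions).
p_value = p_at_least(351, 499)
```

A very small `p_value` indicates that this many correct decisions would be unlikely under random guessing.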